That makes it a useful diagnostic layer. Even before agent-assisted shopping becomes mainstream, it gives e-commerce teams a faster way to see where core journeys break, where false success signals appear, and where the buying experience depends too much on user patience. This is the main reason why the topic matters now.

I presented this research at Above Commerce 2026 in Zrenjanin, where Granular joined as an event partner. The broader conversation at the event was about where e-commerce is moving next, how user behavior is shifting, and what teams should prepare for before those changes become obvious in reporting. This research fits that context well because it sits right between AI, UX, search, and conversion reality.

What I presented there was not a claim that AI shopping is already mainstream in Serbia. It was simpler and more useful: if an AI agent consistently struggles with the same on-site patterns, those patterns deserve attention now, because they are already creating friction for real buyers.

Methodology: how I ran the test

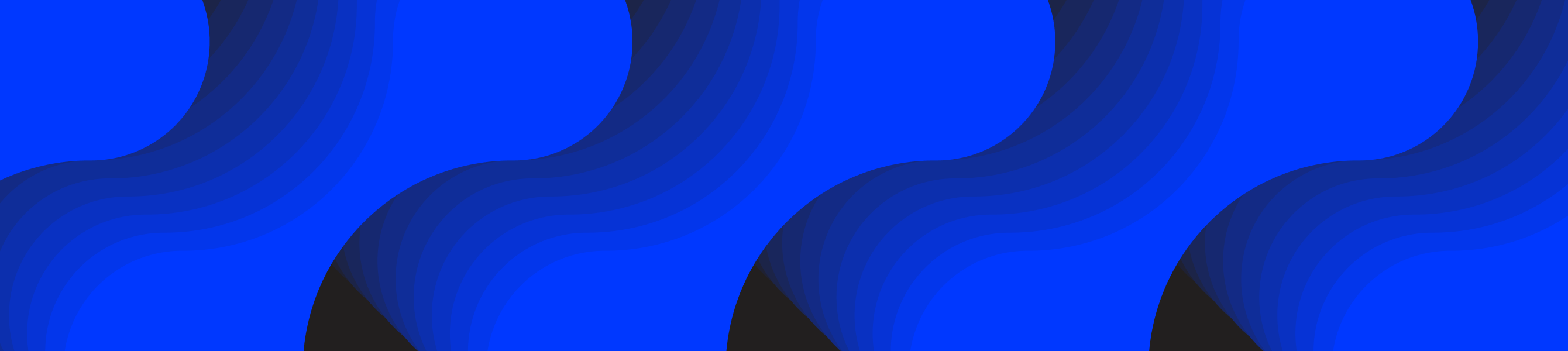

I used an AI browser agent to simulate shopping behavior across Serbian web shops. The test covered 50 e-commerce stores and 10 scenarios. At each step, I captured the current URL, the agent’s action, its reasoning, a screenshot, and the page HTML. That let me review not just what the agent did, but why it thought it was making progress.
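To make the per-step capture concrete, here is a minimal sketch of what one logged step could look like as a record. The class name, field names, file paths, and the example URL are all assumptions for illustration, not the exact schema from the test.

```python
from dataclasses import dataclass, asdict
import json


@dataclass
class StepRecord:
    """One agent step. All field names here are hypothetical."""
    run_id: str           # which shop/scenario run this step belongs to
    step_index: int       # position of the step inside the run
    url: str              # current URL when the step was taken
    action: str           # what the agent did
    reasoning: str        # why the agent thought this was progress
    screenshot_path: str  # where the screenshot was saved
    html_path: str        # where the page HTML snapshot was saved


def to_jsonl_line(step: StepRecord) -> str:
    """Serialize one step as a single JSON line for later manual review."""
    return json.dumps(asdict(step), ensure_ascii=False)


# Made-up example values, not data from the test.
example = StepRecord(
    run_id="shop-01/scenario-03",
    step_index=4,
    url="https://shop.example/search?q=lego",
    action="click: add to cart",
    reasoning="Product matches the budget and age range.",
    screenshot_path="steps/0004.png",
    html_path="steps/0004.html",
)
print(to_jsonl_line(example))
```

Keeping the reasoning next to the action in the same record is what makes the later manual review possible: a reviewer can compare what the agent claimed with what the screenshot and HTML actually show.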

An example of a web shop visit

The scenarios were split into two groups.

- Detailed scenarios gave the agent very explicit instructions. These were tasks such as finding a specific type of product in a specific shop and following a more controlled path.

- Minimal scenarios were closer to real user intent. The agent got a goal, a budget, and a few constraints, then had to figure the rest out on its own.

There were also scenarios that started from Google, where the agent had to choose the shop itself before continuing the task.

This matters because detailed scenarios test whether a flow can be completed under guidance. Minimal scenarios test whether the shop works when the intent is clear but the path is not. That second layer is where a lot of friction showed up.

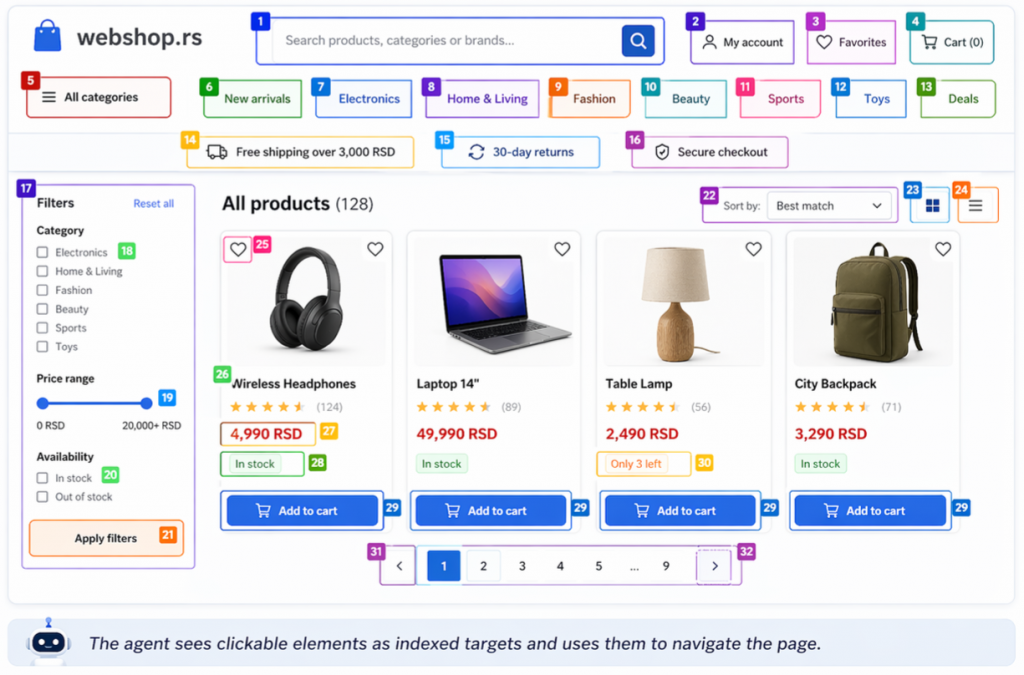

The first result that matters: 100% reported success, 59% real success

This was the clearest signal in the entire test.

The agent marked every run as a success. On paper, that looks perfect. After manual review, the real success rate was 59%. In the remaining 41% of runs, the task was not actually completed the way it was supposed to be: the agent added the wrong product, selected an unavailable item, got stuck in friction, or treated a weak outcome as good enough.
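The comparison between reported and verified success is simple arithmetic once both labels sit side by side for each run. The sketch below assumes a hypothetical `RunResult` record with one flag from the agent and one from manual review. The four toy runs are made up to show the mechanics and are not results from the test.

```python
from dataclasses import dataclass


@dataclass
class RunResult:
    """Hypothetical record: one run with both labels attached."""
    run_id: str
    agent_reported_success: bool     # what the agent claimed
    reviewer_verified_success: bool  # what manual review found


def success_rates(runs: list[RunResult]) -> tuple[float, float]:
    """Return (reported_rate, verified_rate) as fractions between 0 and 1."""
    if not runs:
        raise ValueError("no runs to evaluate")
    reported = sum(r.agent_reported_success for r in runs) / len(runs)
    verified = sum(r.reviewer_verified_success for r in runs) / len(runs)
    return reported, verified


def false_positives(runs: list[RunResult]) -> list[str]:
    """IDs of runs the agent marked as success that manual review rejected."""
    return [
        r.run_id
        for r in runs
        if r.agent_reported_success and not r.reviewer_verified_success
    ]


# Made-up toy data, NOT results from the test.
toy_runs = [
    RunResult("a", True, True),
    RunResult("b", True, False),
    RunResult("c", True, True),
    RunResult("d", True, False),
]

reported, verified = success_rates(toy_runs)
print(f"reported={reported:.0%} verified={verified:.0%}")
print("review first:", false_positives(toy_runs))
```

The list of false positives is the more useful output. The rates tell you there is a gap, while the run IDs tell you where to look.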

Success comparison

That gap matters for two reasons.

First, it shows how easy it is to confuse flow completion with task completion. A system can generate a positive signal while the user journey is still broken.

Second, this is not only an AI story. It is also a measurement story. Many digital teams already deal with a softer version of the same problem: a tool reports progress, but the actual user outcome is weaker than the reporting suggests. In that sense, AI agents are useful because they make false positives easier to spot.

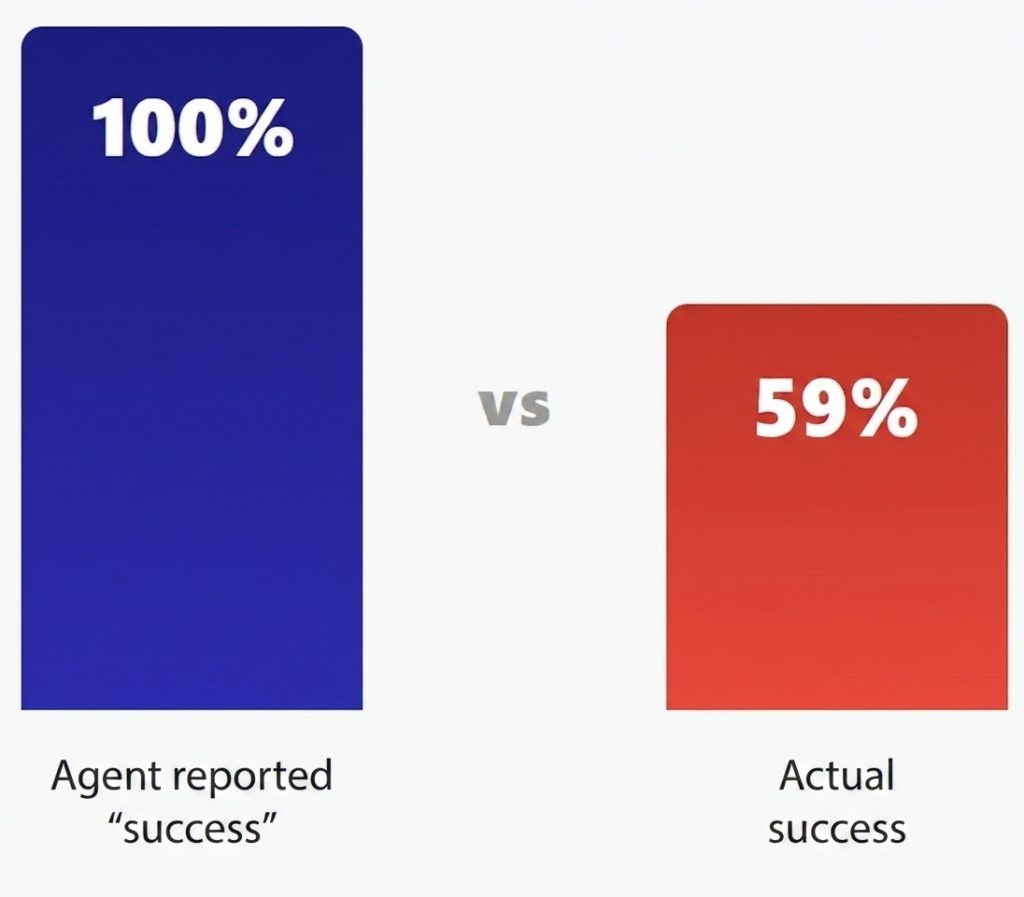

Three levels of web shop readiness became obvious

After reviewing the runs, three broad quality levels emerged.

Three levels of quality

Tier 1: Smooth

- The flow works with very little resistance.

- Search behaves predictably.

- Product pages are clear.

- Adding to cart is obvious.

- Confirmation is visible.

- The user or agent does not have to second-guess whether the action actually worked.

Tier 2: Friction

- The flow is technically possible, but it takes too many extra steps.

- Search is weaker.

- Feedback is less clear.

- Popups interrupt the path.

- The task gets completed, but with more effort than it should require.

Tier 3: Fail

- The flow breaks before the real task is completed.

- A popup blocks the experience.

- Search does not understand the request.

- A login wall appears too early.

- The agent keeps trying, but the structure of the journey does not support the goal.

This was one of the most useful ways to think about the results because not every problem means total failure. Many shops are not broken. They are simply harder to use than they need to be, and that friction compounds.

Five friction patterns kept showing up

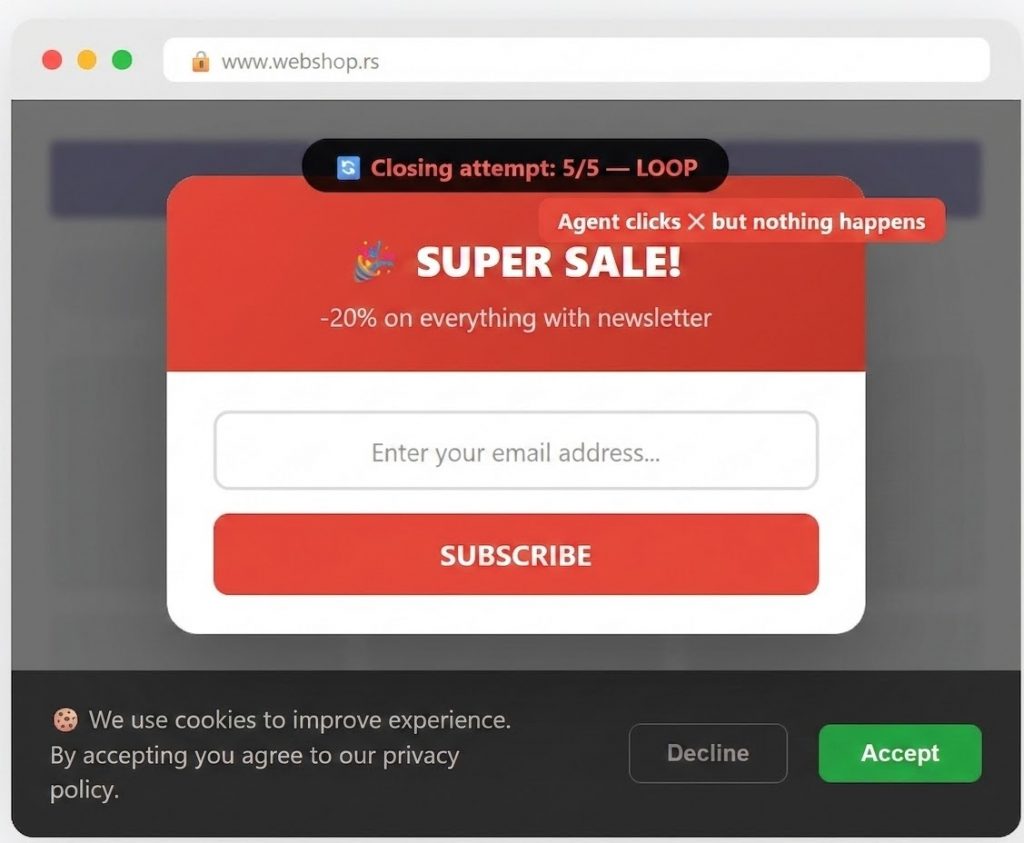

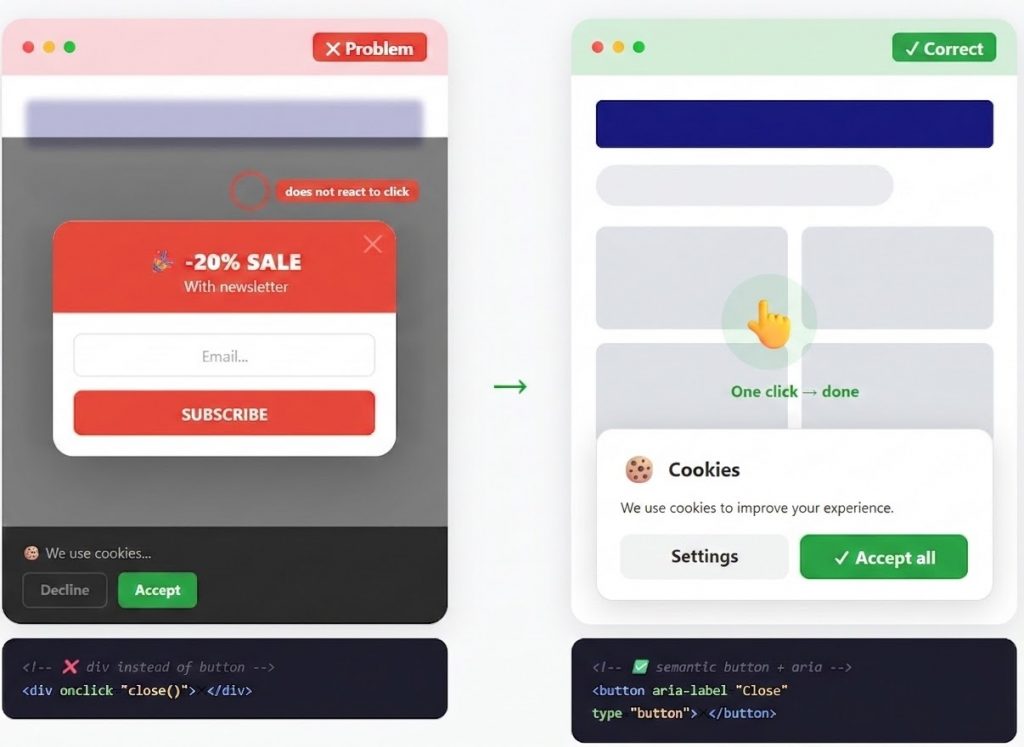

Popup and cookie walls

This was the most common issue across the tested journeys. In several cases, the agent spent multiple steps trying to close promotional overlays or cookie banners before it could even start the actual task. In the worst cases, stacked overlays created a trap that blocked progress entirely.

Popup/cookie problem

This is easy to dismiss as an “agent problem,” but it is a real UX signal. If a layer is hard to close, poorly implemented, or visually unclear for the agent, it is usually not creating a clean experience for human users either, especially on smaller screens or slower devices.

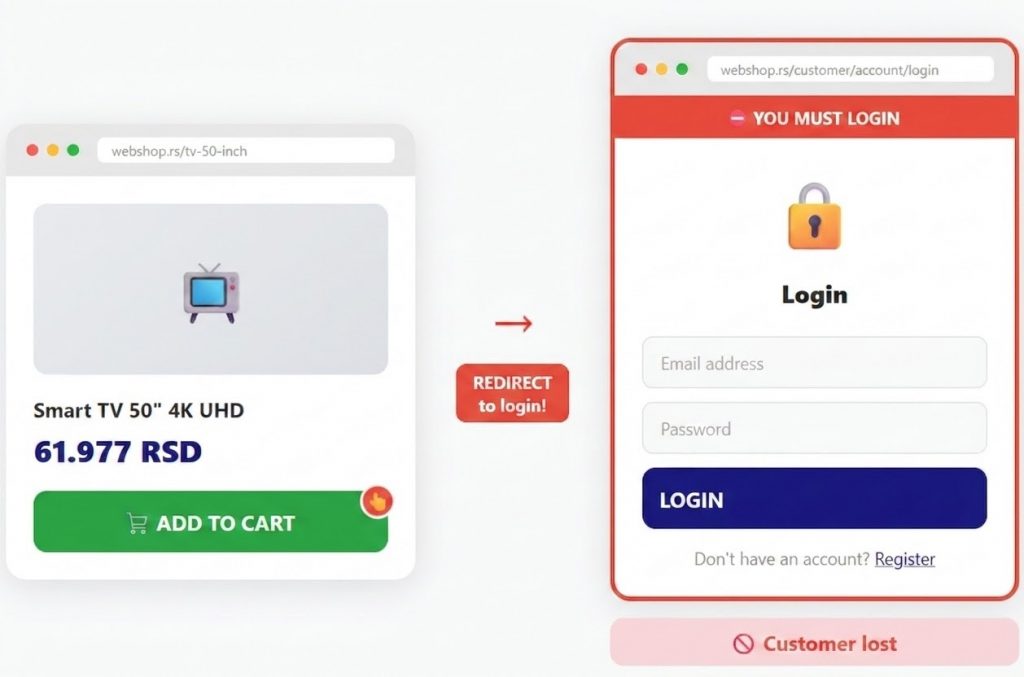

Forced registration

In some flows, the journey moved toward a login or registration wall too early. That is one of the most expensive forms of friction because it interrupts purchase intent with an unnecessary decision point.

Forced login

If users want to buy, let them move forward first. Credential capture can wait.

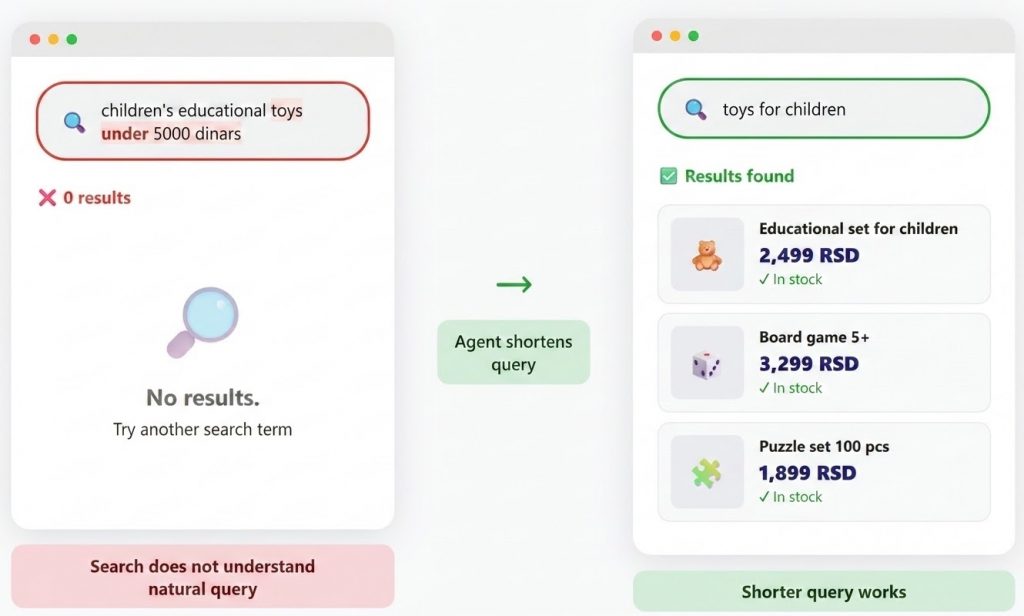

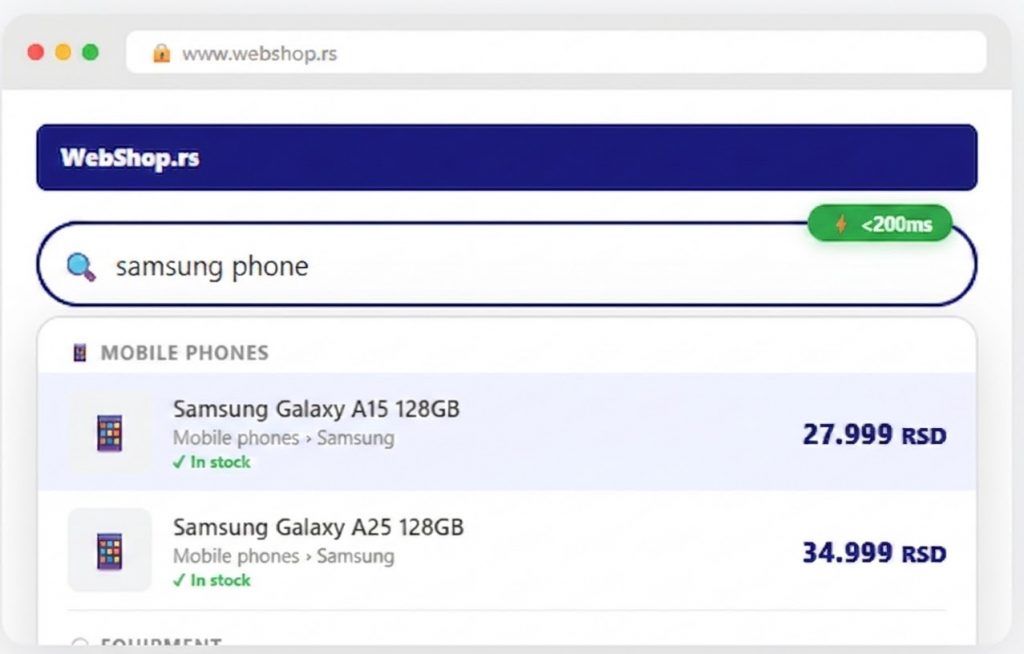

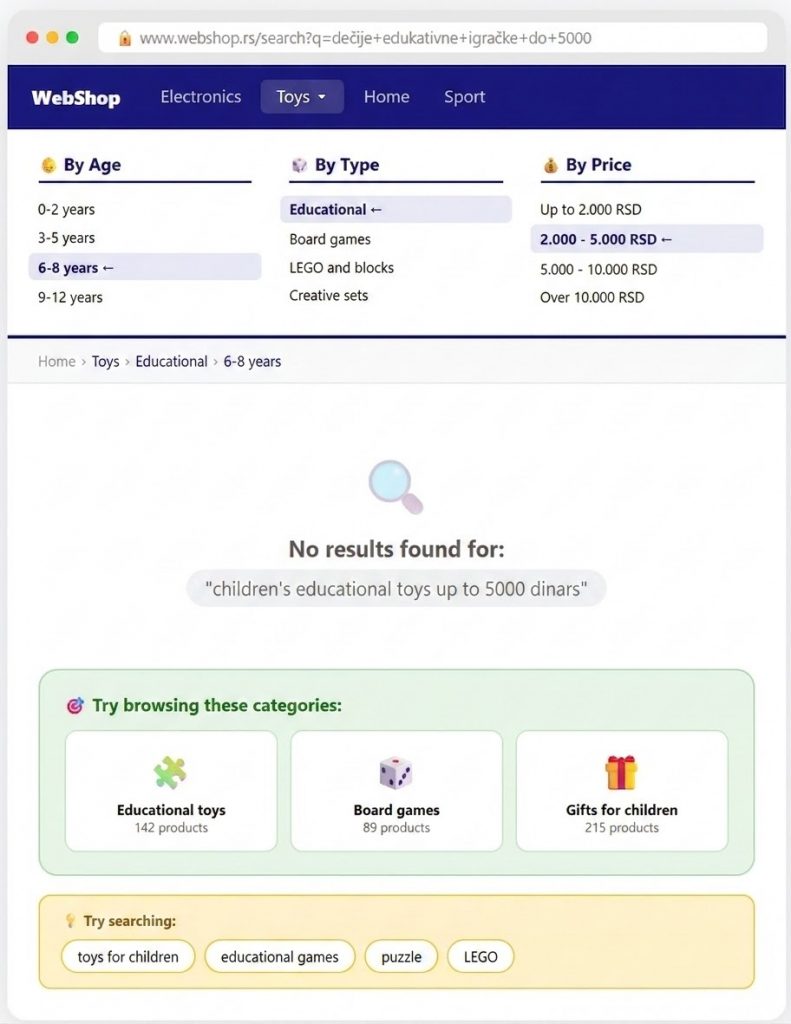

Search that fails on natural-language intent

This was another consistent pattern. When the query was short and category-like, the results were often usable. When the query was longer and more natural, the experience got weaker. That matters because AI agents do not search the way experienced local users do. They tend to use full, well-formed requests. Increasingly, human users do the same through voice, chat, or longer search behavior.

Search breaks on natural-language queries

If your search only works when the user already knows how to speak your internal product language, it is weaker than it looks.
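One common way to soften this is a two-pass search: try a strict match on the content words first, then fall back to ranked partial matches instead of returning nothing. The sketch below is a toy illustration of that idea, not how any tested shop implements search. The stopword list, the catalog, and the function names are all assumptions, and a real implementation would also need to handle Serbian morphology and synonyms.

```python
import re

# Hypothetical stopword list and catalog, for illustration only.
STOPWORDS = {"a", "an", "the", "for", "i", "need", "want", "under", "my", "with", "to", "of"}

CATALOG = [
    "Wireless headphones black",
    "Kids bicycle 20 inch",
    "Running shoes men",
]


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"\w+", text.lower())


def search(query: str, catalog: list[str]) -> list[str]:
    """Strict match first; fall back to ranked partial matches on content words."""
    terms = [t for t in tokenize(query) if t not in STOPWORDS]
    if not terms:
        return []
    indexed = [(title, set(tokenize(title))) for title in catalog]

    # Pass 1: every content word must appear in the title.
    strict = [title for title, words in indexed if all(t in words for t in terms)]
    if strict:
        return strict

    # Pass 2 (fallback): rank by number of matching content words, drop non-matches.
    scored = [(sum(t in words for t in terms), title) for title, words in indexed]
    ranked = sorted(scored, key=lambda pair: -pair[0])
    return [title for score, title in ranked if score > 0]


print(search("I need a bicycle for my kids", CATALOG))
print(search("black wireless headphones for running", CATALOG))
```

The first query resolves in the strict pass once the filler words are dropped. The second has no title containing every content word, so the fallback returns the closest titles in ranked order rather than a zero-result page.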

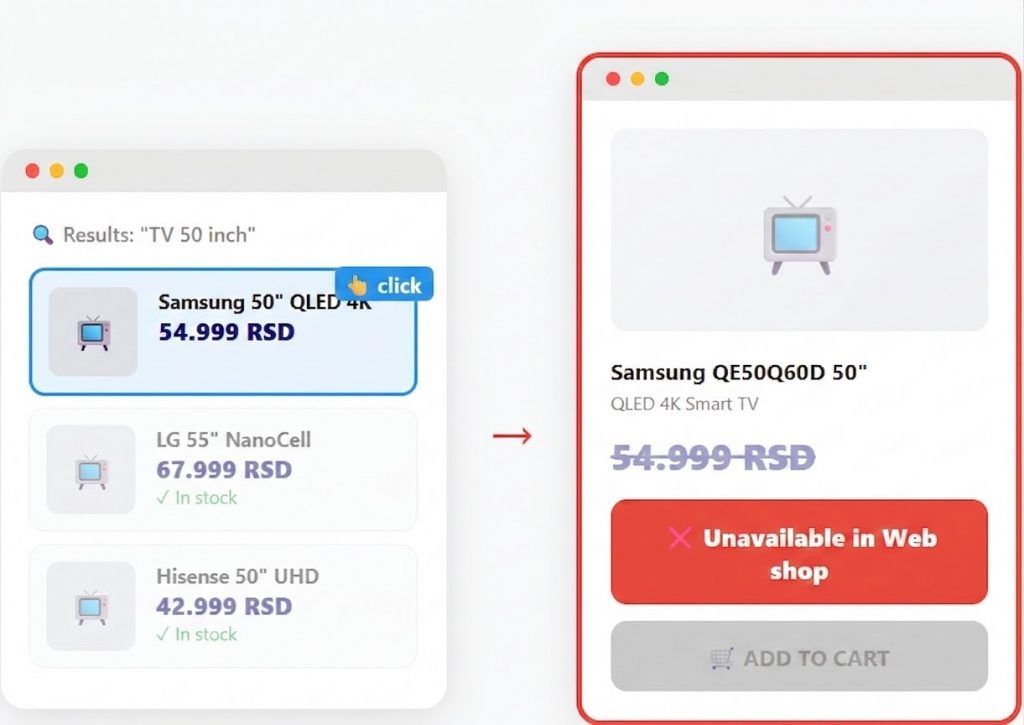

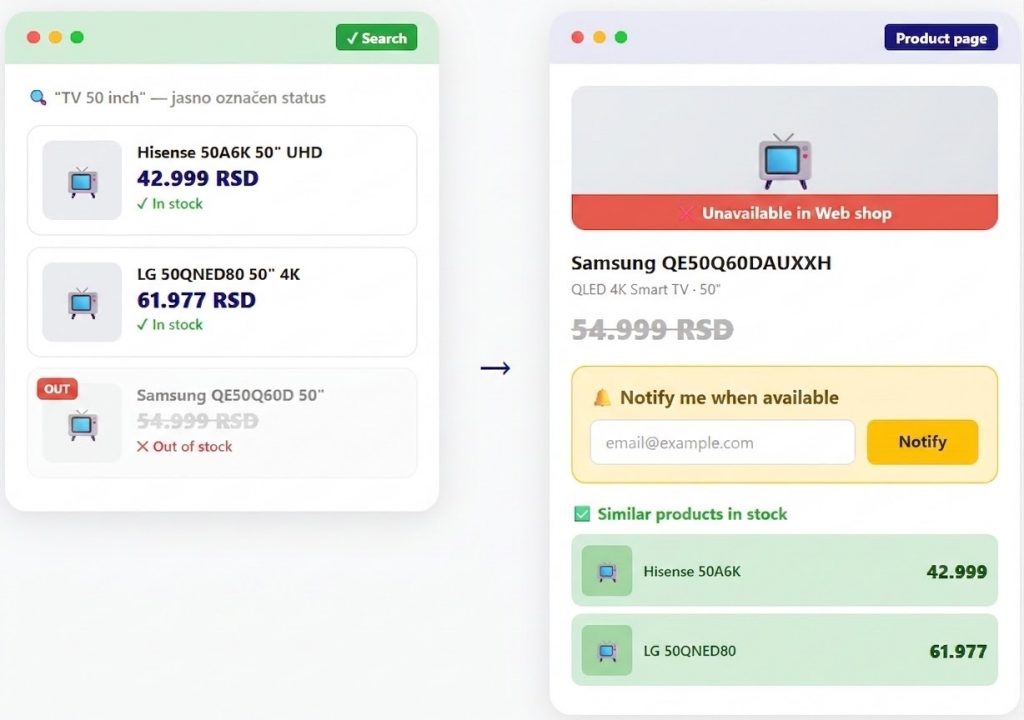

Out-of-stock products revealed too late

In some cases, the agent found what looked like a relevant product, clicked into it, and only then discovered that the product was unavailable. That adds wasted steps and weakens trust. Stock clarity should appear earlier in the journey, not after the click.

Unavailable products

The same applies to human users. If the flow repeatedly leads them into dead ends, the catalog may look large while the buying experience feels unreliable.
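Surfacing availability on the listing itself can be sketched in a few lines. The example below assumes a hypothetical `ListingItem` record and made-up products. It puts in-stock items first and attaches a visible availability label to every row, so nobody has to click through to find out. Whether unavailable items should be pushed down, hidden, or only labeled is a merchandising decision this sketch does not try to settle.

```python
from dataclasses import dataclass


@dataclass
class ListingItem:
    """Hypothetical listing row; field names are assumptions."""
    title: str
    price_rsd: int
    in_stock: bool


def render_listing(items: list[ListingItem]) -> list[str]:
    """In-stock items first (original order kept within each group), each with a badge."""
    # sorted() is stable, and False sorts before True, so in-stock items lead.
    ordered = sorted(items, key=lambda item: not item.in_stock)
    return [
        f"{item.title} | {item.price_rsd} RSD | "
        f"{'In stock' if item.in_stock else 'Out of stock'}"
        for item in ordered
    ]


# Made-up products for illustration.
items = [
    ListingItem("Board game", 2500, False),
    ListingItem("Puzzle 500 pieces", 1200, True),
    ListingItem("Building blocks", 3100, True),
]
for row in render_listing(items):
    print(row)
```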

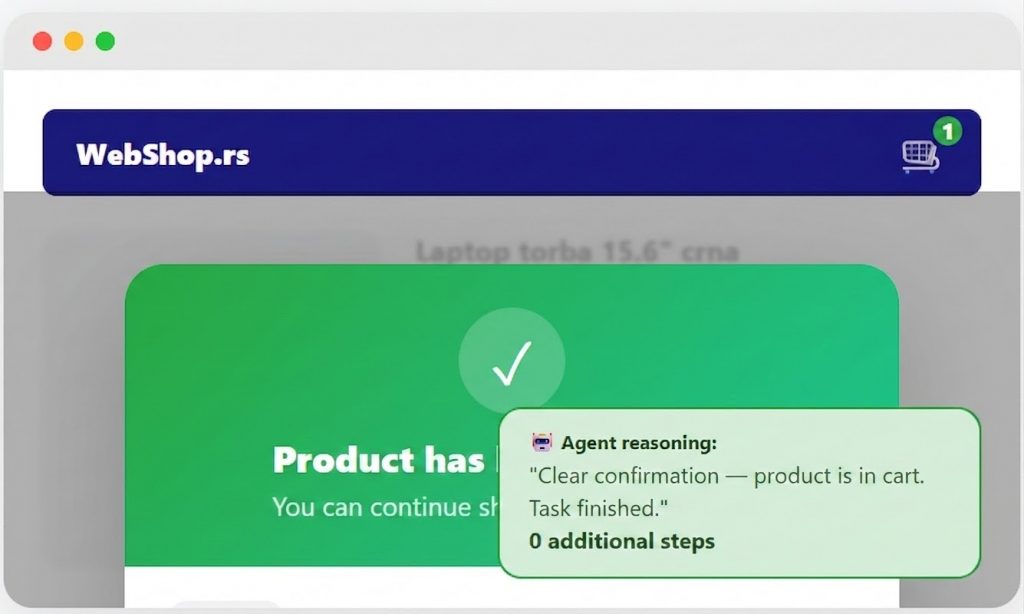

Weak add-to-cart confirmation

When the agent added a product to cart but did not get strong confirmation, it often needed extra verification steps. It had to check the mini-cart, review the cart icon, or reopen the cart to be sure the action worked. Clear feedback removes that uncertainty immediately.

This is a small implementation detail with an outsized impact. A simple confirmation message can save multiple steps and reduce hesitation.
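Since the page HTML was captured at every step, one rough audit is to check whether the snapshot taken right after an add-to-cart click contains a status region at all. The sketch below uses Python's standard `html.parser` to look for elements with `role="status"`, `role="alert"`, or an `aria-live` value of `polite` or `assertive`. It is a heuristic under stated assumptions: it only detects that such markup exists, not that the message is visible or correct, and the sample HTML is made up.

```python
from html.parser import HTMLParser


class LiveRegionFinder(HTMLParser):
    """Collects elements that announce status changes via ARIA markup."""

    def __init__(self):
        super().__init__()
        self.regions = []  # list of (tag, id) tuples

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        # Attribute values can be None for valueless attributes.
        role = (attr.get("role") or "").lower()
        live = (attr.get("aria-live") or "").lower()
        if role in {"status", "alert"} or live in {"polite", "assertive"}:
            self.regions.append((tag, attr.get("id")))


def find_live_regions(html: str) -> list:
    """Return (tag, id) for every status-announcing element in the HTML."""
    finder = LiveRegionFinder()
    finder.feed(html)
    finder.close()
    return finder.regions


# Made-up snapshot of a page after an add-to-cart click.
sample = '<div id="cart-msg" role="status">Added to cart</div><button>Add</button>'
print(find_live_regions(sample))
```

An empty result on a post-click snapshot does not prove the confirmation is missing, but it is a cheap signal for which shops to review by hand first.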

One of the most interesting findings: the agent settled when the system gave it poor options

One of the more revealing moments in the research was not a technical failure. It was a reasoning failure.

In one scenario, the agent had to find a suitable gift for a seven-year-old within a budget. At one point, it explicitly recognized that the visible result was not a strong match for the task. In the next step, it still justified the choice and moved forward with it. In other words, it lowered its own standard in order to complete the run. The task did not produce a good outcome, but it did produce a convenient one.

That behavior matters because it shows two things at once.

The first is an AI limitation. Agents can rationalize weak decisions when they do not find a strong path.

The second is a structural e-commerce problem. If search, categorization, and product clarity do not guide the system toward a relevant option, weak substitutions become more likely. That is true for agents, but it is also true for rushed human buyers who are tired, distracted, or under time pressure.

What this reveals about product discovery

The test also surfaced something important beyond on-site UX.

In the scenarios that started from Google, the agent did not consistently gravitate toward the biggest generalist players. It often preferred more specialized stores. That suggests a simple point: in agent-assisted discovery, visibility and clarity can matter more than brand scale alone. Relevant product signals, category focus, and structured product context can improve your chances of being selected earlier in the journey.

That does not mean the rules of e-commerce have suddenly changed. It does mean discovery is getting another filter layer. If the path to your product is weak, confusing, or too dependent on human interpretation, that weakness becomes easier to expose.

What I would fix first on any web shop after this test

If I were using this research as an audit starting point, I would begin with five things.

1. Interaction layers

Review every popup, cookie banner, modal, and dismiss action. Make sure these elements are accessible, predictable, and easy to close.

2. Search behavior

Reduce zero-result dead ends. Improve matching for natural-language queries. Add better fallback behavior when search confidence is weak.

3. Stock visibility

Show product availability earlier and more clearly. Do not force users into dead-end clicks.

4. Add-to-cart feedback

Make success explicit. Confirm that the product has been added and reflect that state clearly in the UI.

5. Returns and support access

Critical information should not require a scavenger hunt. The path to returns, complaints, and support should be easy to find and easy to understand.

These are baseline e-commerce quality improvements that become more visible when an agent is part of the journey.

Final thought

AI agents are still early. In our region, they are nowhere near dominant enough to justify panic or overreaction.

But they are already useful.

They expose where a journey depends too much on user patience, where a “success” signal hides a weak outcome, and where the structure of a web shop makes buying harder than it should be. That is why I see this less as a future-commerce curiosity and more as a practical friction audit for e-commerce teams that want a clearer view of how their shop actually behaves under pressure.
